My Cloud Resume Challenge

The Challenge

While preparing for my AWS Solutions Architect certification, I looked for hands-on projects where I could apply what I was learning. I came across #TheCloudResumeChallenge, a popular initiative that gives cloud newcomers practical experience with a range of technologies.

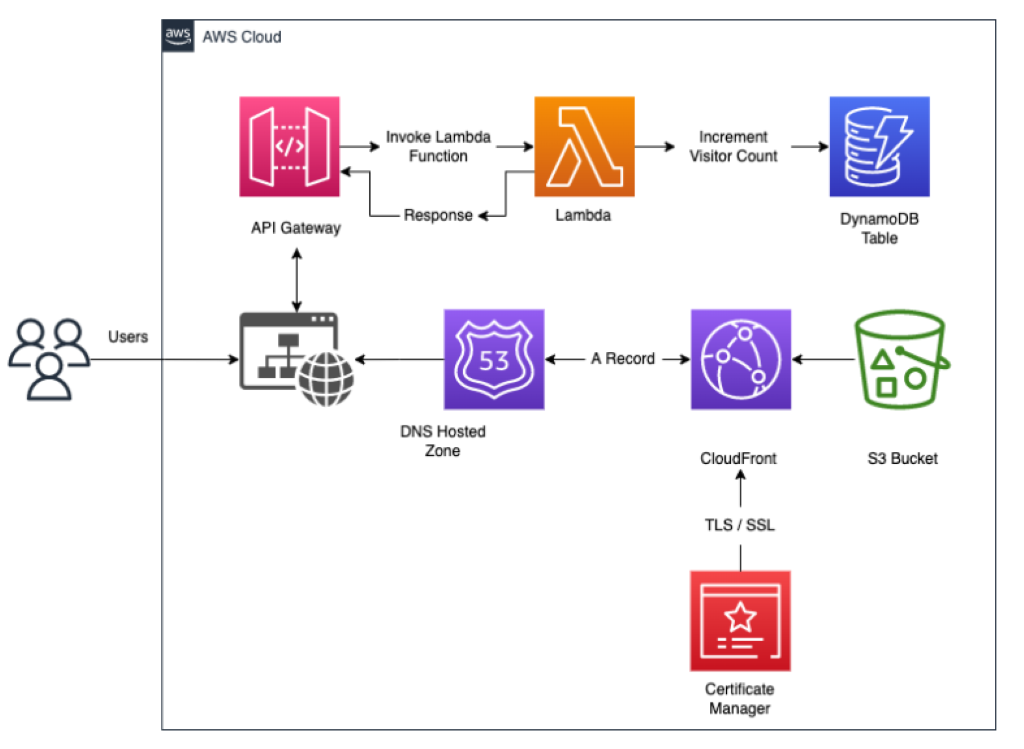

This is the architecture that I put in place for this project.

Website :

I started by searching online for a suitable resume template, but was disappointed by how much customization the templates required. So I decided to take matters into my own hands and build my resume website from scratch, guided by YouTube tutorials.

Frontend :

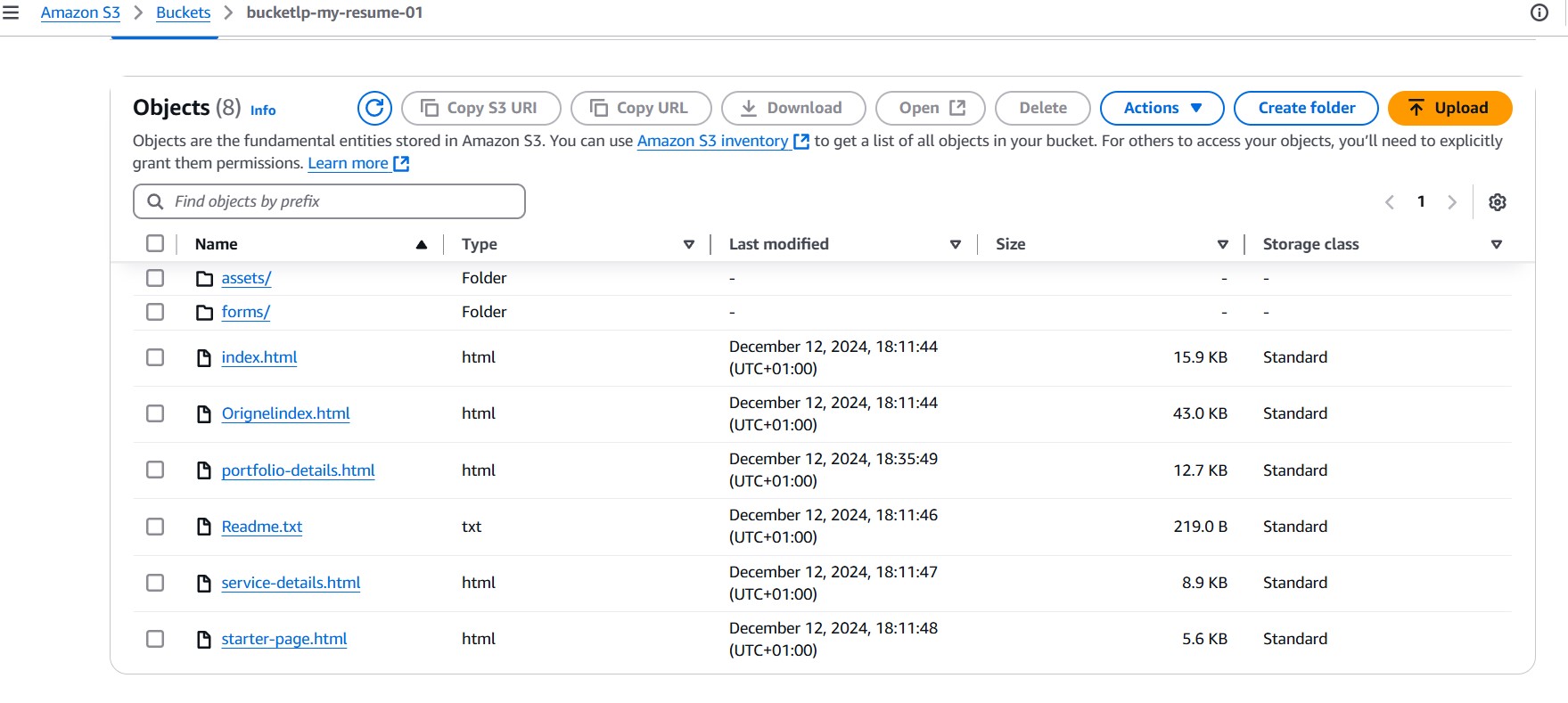

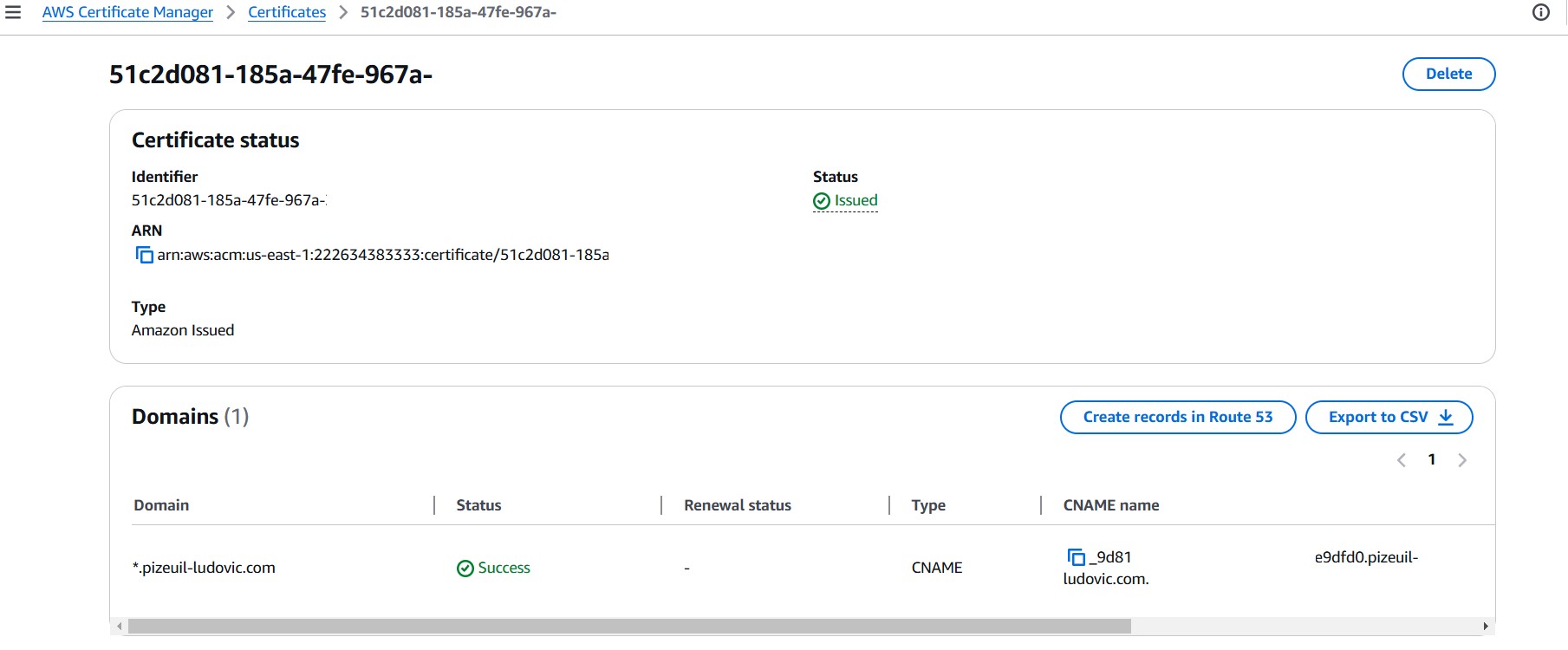

To deploy my resume website, I began by uploading its static files to an Amazon S3 bucket for storage and hosting. To make it accessible online, I registered a domain name and configured DNS records in Amazon Route 53. To ensure secure HTTPS access, I obtained an SSL/TLS certificate from AWS Certificate Manager. To optimize global delivery and reduce latency, I created an Amazon CloudFront distribution and associated it with my domain name using additional Route 53 CNAME records. The frontend architecture flow up to this step was:

Route 53 → CloudFront → Certificate Manager → S3 bucket
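
The upload step can also be scripted. Here is a minimal sketch in Python with boto3, not the exact process I followed: the function names `plan_uploads` and `upload_site` and the bucket name passed in are assumptions made for illustration. The main point it shows is setting a `Content-Type` on each object, so that browsers render the HTML and CSS instead of downloading them.

```python
import mimetypes
from pathlib import Path


def plan_uploads(site_dir):
    """Return (local_path, s3_key, content_type) for every file under site_dir."""
    root = Path(site_dir)
    plan = []
    for path in sorted(root.rglob("*")):
        if not path.is_file():
            continue
        # The S3 key mirrors the path relative to the site root, with "/" separators.
        key = path.relative_to(root).as_posix()
        content_type, _ = mimetypes.guess_type(path.name)
        plan.append((path, key, content_type or "application/octet-stream"))
    return plan


def upload_site(site_dir, bucket, s3_client=None):
    """Upload every planned file to the given bucket (bucket name is hypothetical)."""
    if s3_client is None:
        # Imported lazily so the planning step can run without boto3 or AWS access.
        import boto3
        s3_client = boto3.client("s3")
    for path, key, content_type in plan_uploads(site_dir):
        s3_client.upload_file(
            str(path), bucket, key, ExtraArgs={"ContentType": content_type}
        )
```

Calling `upload_site("./site", "my-resume-bucket")` would push the whole folder, assuming AWS credentials are already configured locally.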

Backend :

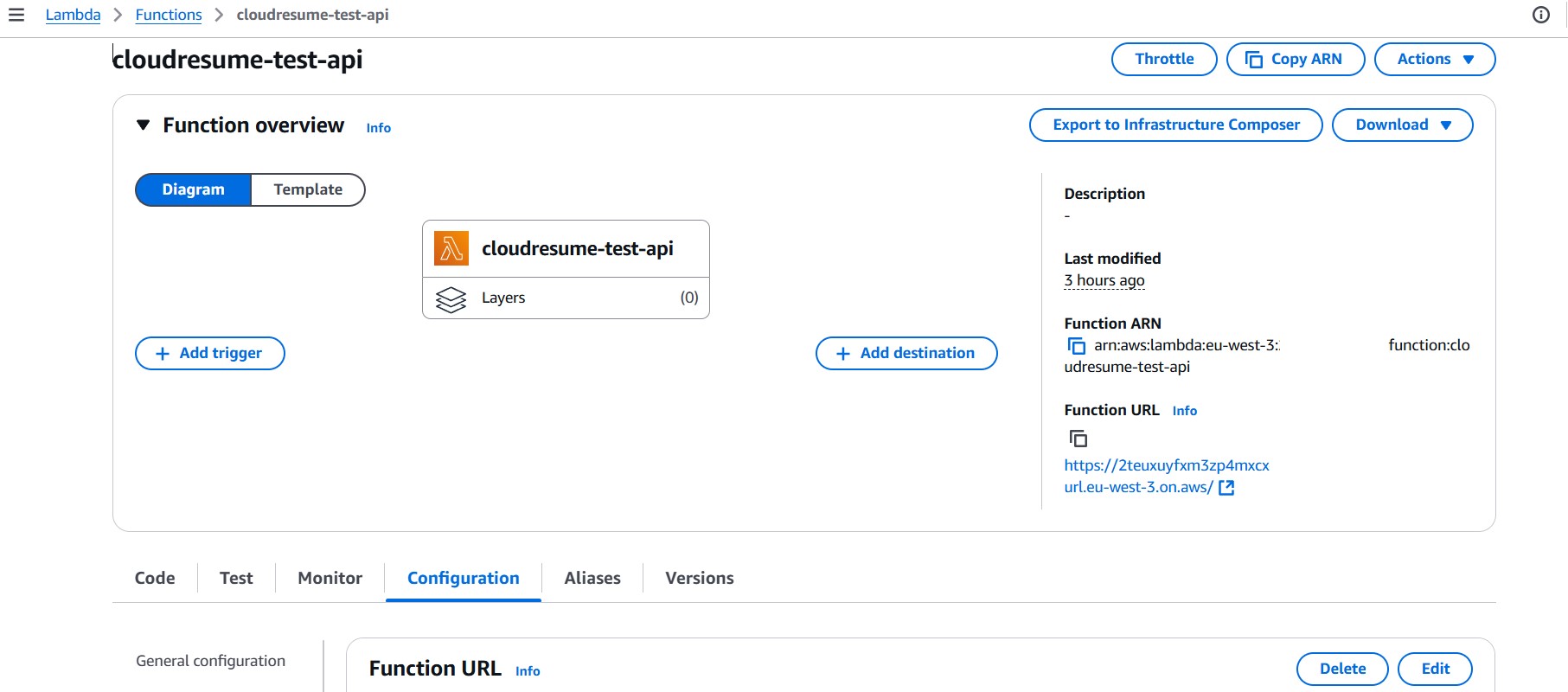

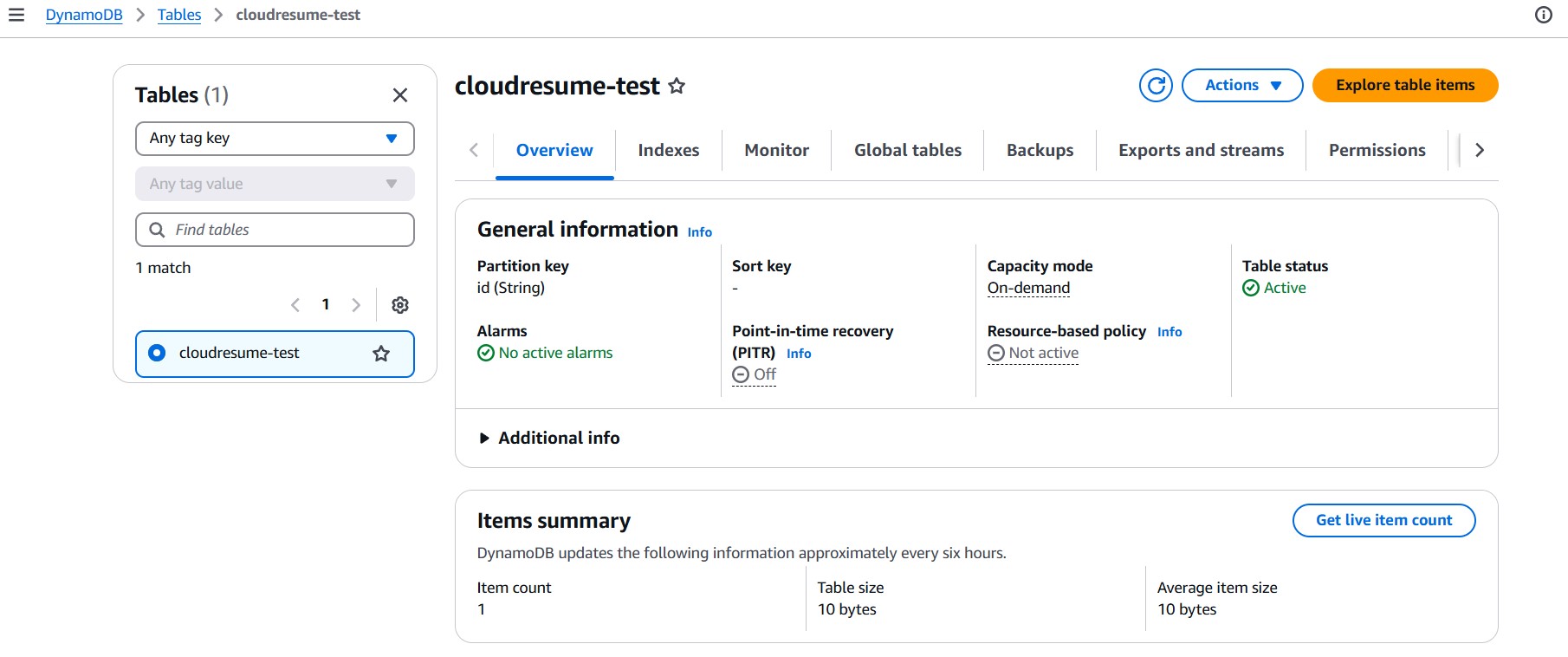

My goal for this challenge was to track website visitor counts, so I needed a backend infrastructure to store and update this data. I chose Amazon DynamoDB as my database, a highly scalable NoSQL database that can efficiently handle frequent updates. To automate the process of updating the visitor count, I implemented an AWS Lambda function. This serverless function, written in Python, calls the DynamoDB API to increment the visitor count. This backend lets the website track and display visitor numbers in real time. The backend infrastructure flow up to this step was:

Lambda → DynamoDB
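
To give an idea of what such a function can look like, here is a minimal sketch of a counter Lambda using boto3. It is an illustration under assumptions, not my exact code: the table name `visitor-count`, the key attribute `id`, the counter item `visitors` and the attribute `visits` are all hypothetical. It uses DynamoDB's `ADD` update expression, which increments atomically and creates the attribute on the first call.

```python
def increment_visits(table, counter_id="visitors"):
    """Atomically add 1 to the counter item and return the new value."""
    response = table.update_item(
        Key={"id": counter_id},                 # hypothetical key schema
        UpdateExpression="ADD visits :one",     # ADD creates "visits" if missing
        ExpressionAttributeValues={":one": 1},
        ReturnValues="UPDATED_NEW",
    )
    # DynamoDB numbers come back as Decimal; convert for JSON-friendly output.
    return int(response["Attributes"]["visits"])


def lambda_handler(event, context, table=None):
    """Lambda entry point; the optional table argument exists only for local testing."""
    if table is None:
        # boto3 is available in the AWS Lambda Python runtime.
        import boto3
        table = boto3.resource("dynamodb").Table("visitor-count")  # assumed name
    return {"visits": increment_visits(table)}
```

Doing the increment in a single `update_item` call avoids the read-then-write race that two simultaneous visitors could otherwise trigger.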

Connect Backend and Frontend :

To connect the frontend website with the backend infrastructure for fetching and updating visitor counts, I used Amazon API Gateway. This service allowed me to create a secure HTTP API that hides the underlying DynamoDB and Lambda services from the browser, ensuring a protected communication channel. The final flow for the infrastructure was:

Route 53 → CloudFront → Certificate Manager → S3 bucket → API Gateway → Lambda → DynamoDB
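
For API Gateway to pass a Lambda result back to the browser, the function returns a response with a status code, headers and a string body. The sketch below shows one plausible shape for that response, assuming a Lambda proxy integration. The names `build_response`, `handle_request` and `bump_counter`, and the origin `https://example.com`, are hypothetical. CORS can alternatively be configured on the API itself, in which case the header set here may not be needed.

```python
import json


def build_response(status_code, payload, allowed_origin):
    """Shape a dict the way a Lambda proxy integration expects it."""
    return {
        "statusCode": status_code,
        "headers": {
            "Content-Type": "application/json",
            # Lets the page served from the resume domain read the response.
            "Access-Control-Allow-Origin": allowed_origin,
        },
        "body": json.dumps(payload),  # body must be a string, not a dict
    }


def handle_request(event, bump_counter, allowed_origin="https://example.com"):
    """Call the counter and translate the outcome into an HTTP response.

    bump_counter is a placeholder for whatever callable updates the count
    and returns the new value.
    """
    try:
        visits = bump_counter()
    except Exception:
        # Hide backend details from the caller; only report a generic failure.
        return build_response(
            500, {"error": "could not update visitor count"}, allowed_origin
        )
    return build_response(200, {"visits": visits}, allowed_origin)
```

On the page, a small script can then request this endpoint and write the `visits` value from the JSON body into the visitor counter element.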

Project information

- Category: AWS Cloud

- Project date: 12 December, 2024

- Project URL: Cloud resume challenge